Relation To Other Quantities

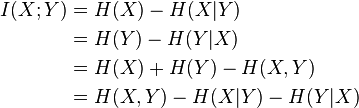

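Mutual information can be equivalently expressed as

$$
\begin{aligned}
I(X;Y) &= H(X) - H(X \mid Y) \\
       &= H(Y) - H(Y \mid X) \\
       &= H(X) + H(Y) - H(X,Y) \\
       &= H(X,Y) - H(X \mid Y) - H(Y \mid X),
\end{aligned}
$$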

where H(X) and H(Y) are the marginal entropies, H(X|Y) and H(Y|X) are the conditional entropies, and H(X,Y) is the joint entropy of X and Y. Applying Jensen's inequality to the definition of mutual information shows that I(X;Y) is non-negative; consequently, H(X) ≥ H(X|Y).
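As a sanity check on these identities, the following Python sketch computes each quantity directly from its definition for a small joint distribution and confirms that H(X) - H(X|Y), H(Y) - H(Y|X), H(X) + H(Y) - H(X,Y) and H(X,Y) - H(X|Y) - H(Y|X) all equal I(X;Y), and that I(X;Y) ≥ 0. The distribution in `JOINT` and the helper names (`entropy`, `marginals`, `conditional_entropy`, `mutual_information`) are hypothetical, chosen only for illustration; the conditional entropies are computed from -Σ p(x,y) log p(x|y) rather than from the chain rule, so the check is not circular. Logarithms are base 2, so all quantities are in bits.

```python
from math import log2

# Hypothetical joint pmf p(x, y) over X, Y in {0, 1}; illustrative numbers only.
JOINT = {
    (0, 0): 0.4, (0, 1): 0.1,
    (1, 0): 0.2, (1, 1): 0.3,
}


def entropy(probs):
    """Shannon entropy in bits; zero-probability terms contribute nothing."""
    return -sum(p * log2(p) for p in probs if p > 0)


def marginals(joint):
    """Return the marginal pmfs p(x) and p(y) as dicts."""
    px, py = {}, {}
    for (x, y), p in joint.items():
        px[x] = px.get(x, 0.0) + p
        py[y] = py.get(y, 0.0) + p
    return px, py


def conditional_entropy(joint, given):
    """H(X|Y) = -sum p(x, y) log2 p(x|y) if given == "y";
    H(Y|X) = -sum p(x, y) log2 p(y|x) if given == "x".
    Computed from the definition, not from the chain rule."""
    px, py = marginals(joint)
    total = 0.0
    for (x, y), p in joint.items():
        if p > 0:
            p_cond_on = py[y] if given == "y" else px[x]
            total -= p * log2(p / p_cond_on)
    return total


def mutual_information(joint):
    """I(X;Y) in bits from sum p(x, y) log2[p(x, y) / (p(x) p(y))]."""
    px, py = marginals(joint)
    return sum(p * log2(p / (px[x] * py[y]))
               for (x, y), p in joint.items() if p > 0)


px, py = marginals(JOINT)
h_x, h_y = entropy(px.values()), entropy(py.values())
h_xy = entropy(JOINT.values())
h_x_given_y = conditional_entropy(JOINT, given="y")
h_y_given_x = conditional_entropy(JOINT, given="x")
mi = mutual_information(JOINT)

# The four equivalent expressions for I(X;Y).
forms = [
    h_x - h_x_given_y,
    h_y - h_y_given_x,
    h_x + h_y - h_xy,
    h_xy - h_x_given_y - h_y_given_x,
]
assert all(abs(f - mi) < 1e-9 for f in forms)
assert mi >= 0 and h_x >= h_x_given_y  # non-negativity, hence H(X) >= H(X|Y)
print(f"I(X;Y) = {mi:.4f} bits")  # prints I(X;Y) = 0.1245 bits
```

Replacing `JOINT` with a product distribution such as p(x,y) = 0.25 everywhere drives `mi` to zero, matching the case where knowing Y removes no uncertainty about X.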

Intuitively, if entropy H(X) is regarded as a measure of uncertainty about a random variable, then H(X|Y) is a measure of what Y does not say about X. This is "the amount of uncertainty remaining about X after Y is known", and thus the right side of the first of these equalities can be read as "the amount of uncertainty in X, minus the amount of uncertainty in X which remains after Y is known", which is equivalent to "the amount of uncertainty in X which is removed by knowing Y". This corroborates the intuitive meaning of mutual information as the amount of information (that is, reduction in uncertainty) that knowing either variable provides about the other.

Note that in the discrete case H(X|X) = 0, and therefore H(X) = I(X;X). Since discrete conditional entropy is also non-negative, I(X;Y) = H(X) - H(X|Y) ≤ H(X) = I(X;X). Thus I(X;X) ≥ I(X;Y), and one can formulate the basic principle that a variable contains at least as much information about itself as any other variable can provide.
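For a discrete variable, I(X;X) = H(X) can be checked numerically: the joint distribution of (X, X) puts all of its mass on the diagonal. The sketch below assumes a hypothetical three-symbol distribution for X and a hypothetical noisy observation Y that equals X with probability 0.8 and is otherwise replaced by an erasure symbol "?", then compares I(X;X) with I(X;Y). The helper names are our own, not from any library.

```python
from math import log2


def entropy(pmf):
    """Shannon entropy in bits of a pmf given as a dict."""
    return -sum(p * log2(p) for p in pmf.values() if p > 0)


def mutual_information(joint):
    """I(X;Y) in bits from a joint pmf given as {(x, y): p}."""
    px, py = {}, {}
    for (x, y), p in joint.items():
        px[x] = px.get(x, 0.0) + p
        py[y] = py.get(y, 0.0) + p
    return sum(p * log2(p / (px[x] * py[y]))
               for (x, y), p in joint.items() if p > 0)


# Hypothetical marginal distribution of X.
p_x = {"a": 0.5, "b": 0.25, "c": 0.25}

# Joint distribution of (X, X): all mass on the diagonal, since X determines itself.
self_joint = {(x, x): p for x, p in p_x.items()}

# Hypothetical noisy observation Y: equals X with prob. 0.8, else the symbol "?".
noisy_joint = {}
for x, p in p_x.items():
    noisy_joint[(x, x)] = 0.8 * p
    noisy_joint[(x, "?")] = 0.2 * p

h_x = entropy(p_x)
i_xx = mutual_information(self_joint)
i_xy = mutual_information(noisy_joint)

assert abs(i_xx - h_x) < 1e-12  # I(X;X) = H(X) for discrete X
assert i_xy <= i_xx             # Y carries no more information about X than X itself
print(h_x, i_xx, i_xy)
```

Here H(X) = 1.5 bits, and because the erasure happens with probability 0.2 independently of X, I(X;Y) = 0.8 · H(X) = 1.2 bits, strictly less than I(X;X).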

Mutual information can also be expressed as the Kullback-Leibler divergence of the product p(x) × p(y) of the marginal distributions of X and Y from their joint distribution p(x,y):

$$
I(X;Y) = D_{\mathrm{KL}}\big(p(x,y) \,\|\, p(x)\,p(y)\big) = \sum_{x,y} p(x,y) \log \frac{p(x,y)}{p(x)\,p(y)}.
$$

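Furthermore, let p(x|y) = p(x, y) / p(y). Then

$$
\begin{aligned}
I(X;Y) &= \sum_{y} p(y) \sum_{x} p(x \mid y) \log \frac{p(x \mid y)}{p(x)} \\
       &= \sum_{y} p(y)\, D_{\mathrm{KL}}\big(p(x \mid y) \,\|\, p(x)\big) \\
       &= \mathbb{E}_{Y}\Big[D_{\mathrm{KL}}\big(p(x \mid y) \,\|\, p(x)\big)\Big].
\end{aligned}
$$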

Thus mutual information can also be understood as the expectation, taken over Y, of the Kullback-Leibler divergence of the univariate distribution p(x) of X from the conditional distribution p(x|y) of X given Y: the more the conditional distributions p(x|y) differ from p(x) on average, the greater the information gain.
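The two Kullback-Leibler forms can be checked against each other numerically. The Python sketch below, using a hypothetical two-by-two joint distribution and helper names of our own choosing, computes D_KL(p(x,y) ‖ p(x)p(y)) and the p(y)-weighted average of D_KL(p(x|y) ‖ p(x)), and confirms that they coincide (base-2 logarithms, so the result is in bits).

```python
from math import log2


def kl_divergence(p, q):
    """D_KL(p || q) in bits for pmfs given as dicts; assumes q[k] > 0 wherever p[k] > 0."""
    return sum(pv * log2(pv / q[k]) for k, pv in p.items() if pv > 0)


# Hypothetical joint pmf p(x, y); illustrative numbers only.
joint = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.3}

# Marginals p(x) and p(y).
px, py = {}, {}
for (x, y), p in joint.items():
    px[x] = px.get(x, 0.0) + p
    py[y] = py.get(y, 0.0) + p

# Form 1: divergence of the product of marginals from the joint distribution.
product = {(x, y): px[x] * py[y] for (x, y) in joint}
mi_joint_vs_product = kl_divergence(joint, product)


def conditional_given(y):
    """p(x|y) = p(x, y) / p(y) as a dict over x."""
    return {x: joint[(x, y)] / py[y] for x in px}


# Form 2: expectation over Y of D_KL(p(x|y) || p(x)).
mi_expected_kl = sum(py[y] * kl_divergence(conditional_given(y), px) for y in py)

assert abs(mi_joint_vs_product - mi_expected_kl) < 1e-9
print(mi_joint_vs_product, mi_expected_kl)
```

Because each per-y divergence is non-negative, the second form also makes the non-negativity of I(X;Y) immediate: it is an average of non-negative terms.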
