Definition

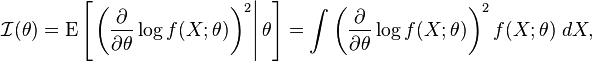

The Fisher information is a way of measuring the amount of information that an observable random variable X carries about an unknown parameter θ upon which the probability of X depends. The probability function for X, which is also the likelihood function for θ, is a function f(X; θ); it is the probability mass (or probability density) of the random variable X conditional on the value of θ. The partial derivative with respect to θ of the natural logarithm of the likelihood function is called the score. Under certain regularity conditions, it can be shown that the first moment of the score (that is, its expected value) is 0. The second moment is called the Fisher information:

$$\mathcal{I}(\theta) = \operatorname{E}\left[\left.\left(\frac{\partial}{\partial\theta}\log f(X;\theta)\right)^{2}\,\right|\,\theta\right],$$

where, for any given value of θ, the expression E[… | θ] denotes the conditional expectation over values for X with respect to the probability function f(x; θ) given θ. Note that $\mathcal{I}(\theta) \geq 0$, since it is the expectation of a square. A random variable carrying high Fisher information implies that the absolute value of the score is often high. The Fisher information is not a function of a particular observation, as the random variable X has been averaged out.

Since the expectation of the score is zero, the Fisher information is also the variance of the score.
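As an illustration of the statements above, the following minimal sketch computes the moments of the score exactly for a Bernoulli(p) variable by summing over its two outcomes. The Bernoulli model, the function names, and the value p = 0.3 are assumptions chosen for the example; they do not come from the text.

```python
def bernoulli_pmf(x, p):
    """Probability mass f(x; p) of a Bernoulli(p) variable, x in {0, 1}."""
    return p if x == 1 else 1.0 - p


def bernoulli_score(x, p):
    """Score: d/dp log f(x; p) = x/p - (1 - x)/(1 - p)."""
    return x / p - (1 - x) / (1.0 - p)


def score_moments(p):
    """Exact mean, second moment and variance of the score at parameter p."""
    support = (0, 1)
    mean = sum(bernoulli_score(x, p) * bernoulli_pmf(x, p) for x in support)
    second = sum(bernoulli_score(x, p) ** 2 * bernoulli_pmf(x, p) for x in support)
    return mean, second, second - mean ** 2


p = 0.3  # illustrative parameter value
mean, second, variance = score_moments(p)

# Known closed form for the Bernoulli model: I(p) = 1 / (p (1 - p)).
closed_form = 1.0 / (p * (1.0 - p))

print("E[score]      =", mean)
print("E[score^2]    =", second)
print("Var[score]    =", variance)
print("1 / (p(1-p))  =", closed_form)
```

Up to floating-point rounding, the mean of the score is zero, so its second moment and its variance coincide and both match the closed form 1/(p(1 − p)).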

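If log f(x; θ) is twice differentiable with respect to θ, and under certain regularity conditions, then the Fisher information may also be written as

$$\mathcal{I}(\theta) = -\operatorname{E}\left[\left.\frac{\partial^{2}}{\partial\theta^{2}}\log f(X;\theta)\,\right|\,\theta\right].$$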

Thus, the Fisher information is the negative of the expectation of the second derivative with respect to θ of the natural logarithm of f. Information may be seen as a measure of the "curvature" of the support curve (the graph of the log-likelihood as a function of θ) near the maximum likelihood estimate of θ. A "blunt" support curve (one with a shallow maximum) has an expected second derivative that is small in magnitude, and thus low information, while a sharp one has an expected second derivative that is large in magnitude, and thus high information.
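A short worked example may help connect the two expressions for the information. It assumes a normal model with unknown mean μ and known variance σ²; this model is chosen for illustration and is not taken from the text.

$$
\begin{aligned}
\log f(x;\mu) &= -\tfrac{1}{2}\log(2\pi\sigma^{2}) - \frac{(x-\mu)^{2}}{2\sigma^{2}}, \\
\frac{\partial}{\partial\mu}\log f(x;\mu) &= \frac{x-\mu}{\sigma^{2}}, \qquad
\frac{\partial^{2}}{\partial\mu^{2}}\log f(x;\mu) = -\frac{1}{\sigma^{2}}, \\
\operatorname{E}\left[\left(\frac{X-\mu}{\sigma^{2}}\right)^{2}\right] &= \frac{\sigma^{2}}{\sigma^{4}} = \frac{1}{\sigma^{2}}, \qquad
-\operatorname{E}\left[-\frac{1}{\sigma^{2}}\right] = \frac{1}{\sigma^{2}}.
\end{aligned}
$$

Both routes give an information of 1/σ² about μ. A smaller σ² makes the log-likelihood more sharply curved in μ and yields more information, in line with the curvature interpretation.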

Information is additive, in that the information yielded by two independent experiments is the sum of the information from each experiment separately:

$$\mathcal{I}_{X,Y}(\theta) = \mathcal{I}_{X}(\theta) + \mathcal{I}_{Y}(\theta).$$

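This result follows from the elementary fact that if random variables are independent, the variance of their sum is the sum of their variances: the score of the joint experiment is the sum of the two individual scores, which are themselves independent. Hence the information in a random sample of size n is n times that in a sample of size 1 (if the observations are independent and identically distributed).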
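Additivity can be checked numerically on small discrete models. The sketch below is illustrative only: the helper `fisher_information`, the two experiments X ~ Bernoulli(θ) and Y ~ Bernoulli(θ²), and the value θ = 0.4 are assumptions made for the example, and the score is approximated by a central finite difference rather than computed analytically.

```python
import itertools
import math


def fisher_information(pmf, support, theta, h=1e-6):
    """Second moment of the score, summed exactly over a finite support.

    The score d/dtheta log pmf(x; theta) is approximated by a central
    finite difference with step h.
    """
    total = 0.0
    for x in support:
        score = (math.log(pmf(x, theta + h)) - math.log(pmf(x, theta - h))) / (2 * h)
        total += score ** 2 * pmf(x, theta)
    return total


def pmf_x(x, theta):
    """X ~ Bernoulli(theta)."""
    return theta if x == 1 else 1.0 - theta


def pmf_y(y, theta):
    """Y ~ Bernoulli(theta**2): a hypothetical second experiment about theta."""
    q = theta ** 2
    return q if y == 1 else 1.0 - q


def pmf_joint(xy, theta):
    """Joint pmf of (X, Y) when the two experiments are independent."""
    x, y = xy
    return pmf_x(x, theta) * pmf_y(y, theta)


def pmf_sample(xs, theta):
    """Joint pmf of an i.i.d. Bernoulli(theta) sample xs."""
    return math.prod(pmf_x(x, theta) for x in xs)


theta = 0.4  # illustrative parameter value
binary = (0, 1)

info_x = fisher_information(pmf_x, binary, theta)
info_y = fisher_information(pmf_y, binary, theta)
info_xy = fisher_information(pmf_joint, list(itertools.product(binary, repeat=2)), theta)

n = 3  # size of the i.i.d. sample
info_sample = fisher_information(pmf_sample, list(itertools.product(binary, repeat=n)), theta)

print("I_X + I_Y =", info_x + info_y, " I_{X,Y} =", info_xy)
print("n * I_X   =", n * info_x, " I_sample =", info_sample)
```

Within the accuracy of the finite-difference approximation, the joint information equals the sum of the separate informations, and the information in the sample of size n equals n times that of a single observation.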

The information provided by a sufficient statistic is the same as that of the sample X. This may be seen by using Neyman's factorization criterion for a sufficient statistic. If T(X) is sufficient for θ, then

$$f(X;\theta) = g(T(X),\theta)\,h(X)$$

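for some functions g and h. See sufficient statistic for a more detailed explanation. The equality of information then follows from the following fact:

$$\frac{\partial}{\partial\theta}\log\left[f(X;\theta)\right] = \frac{\partial}{\partial\theta}\log\left[g(T(X),\theta)\right],$$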

which follows from the definition of Fisher information, and the independence of h(X) from θ. More generally, if T = t(X) is a statistic, then

$$\mathcal{I}_{T}(\theta) \leq \mathcal{I}_{X}(\theta),$$

with equality if and only if T is a sufficient statistic.
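The comparison between a sufficient and a non-sufficient statistic can be sketched numerically. The example below assumes a sample of two i.i.d. Bernoulli(p) observations and compares the whole sample, the sum (sufficient for p), and the maximum (not sufficient). The helper names and the value p = 0.3 are hypothetical choices for illustration, and the score is again approximated by a central finite difference.

```python
import itertools
import math
from collections import defaultdict


def sample_pmf(xs, p):
    """Joint pmf of an i.i.d. Bernoulli(p) sample xs."""
    return math.prod(p if x == 1 else 1.0 - p for x in xs)


def statistic_pmf(statistic, n, p):
    """Distribution of T = statistic(X) for n i.i.d. Bernoulli(p) draws."""
    dist = defaultdict(float)
    for xs in itertools.product((0, 1), repeat=n):
        dist[statistic(xs)] += sample_pmf(xs, p)
    return dict(dist)


def information_of_statistic(statistic, n, p, h=1e-6):
    """Fisher information about p carried by T = statistic(X).

    Uses a central finite difference of the log pmf of T as the score.
    """
    lo = statistic_pmf(statistic, n, p - h)
    mid = statistic_pmf(statistic, n, p)
    hi = statistic_pmf(statistic, n, p + h)
    return sum(
        ((math.log(hi[t]) - math.log(lo[t])) / (2 * h)) ** 2 * mid[t]
        for t in mid
    )


n, p = 2, 0.3  # illustrative sample size and parameter value

info_sample = information_of_statistic(lambda xs: xs, n, p)  # the whole sample
info_sum = information_of_statistic(sum, n, p)               # sufficient statistic
info_max = information_of_statistic(max, n, p)               # not sufficient

print("sample:", info_sample)
print("sum   :", info_sum)
print("max   :", info_max)
```

The sum carries the same information as the full sample, n/(p(1 − p)), while the maximum carries strictly less, which matches the inequality and its equality condition.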
